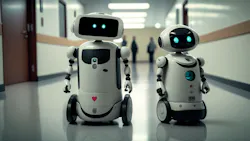

Every time I attend a conference session or webinar on artificial intelligence technologies in healthcare, a journalist or two will ask, “Are robots going to replace humans?” The question generally draws a chuckle from attendees and presenters alike, followed by the reassurance that the technology is there to assist clinicians and hospital staff, not to replace them.

These conversations usually get me thinking about how far the healthcare industry has come in the past 100 years, and how some advancements that we take for granted today were truly groundbreaking at the time.

Before 1923, scarlet fever was devastating people of all ages; those who contracted the disease could suffer blindness, deafness, heart and kidney conditions, and permanent paralysis. A yellow flag and a printed notice were usually posted outside an afflicted person's home to warn visitors of the danger. In 1924, husband-and-wife researchers George and Gladys Dick introduced a serum to combat the disease, but there was still no cure.1

On February 12, 1941, Albert Alexander became the first recipient of Oxford penicillin. The drug's story had begun in 1928, when Dr. Alexander Fleming of London noticed mold growing on a Petri dish of *Staphylococcus* bacteria and preventing the bacteria around it from growing. In 1939, Howard Florey, Ernst Chain, and their colleagues at the Sir William Dunn School of Pathology at Oxford University began their work on the purification and chemistry of penicillin, eventually turning it into a life-saving drug. Alexander, a 43-year-old policeman, had scratched his mouth while pruning roses and developed an infection affecting his eyes, face, and lungs. Unfortunately, supplies of the drug ran short, and Alexander died.2

Fast-forward 20 years, and the pharmaceutical industry had taken off. In the 1960s, new drugs such as the contraceptive pill, Valium, Librium, blood-pressure drugs, and other cardiovascular medications came to market. At the same time, people, particularly in the U.S., were demanding greater access to healthcare and protection against unsafe medications.3

Two decades after that, in 1982, physicians at the University of Utah Medical Center in Salt Lake City successfully implanted a permanent artificial heart in a 61-year-old patient.4

A more recent development that now seems commonplace is the electronic health record (EHR). The Health Information Technology for Economic and Clinical Health (HITECH) Act, enacted as part of the American Recovery and Reinvestment Act of 2009, was signed into law on Feb. 17, 2009. The act motivated healthcare organizations to implement EHR systems to improve the accuracy of health records and patients' access to them. It included incentives to purchase certified EHR systems and authorized Medicare and Medicaid to provide payments to hospitals and physicians who demonstrate “meaningful use” of EHRs.5

Finally, in 2023, Healthcare Purchasing News named a new Editor-in-Chief, which is just one reason why I am writing to you. I’ve spent most of my career in healthcare journalism and have become incredibly passionate about all things related to our industry. My promise to you, our readers, is to keep an eye on the ever-evolving healthcare landscape and continue delivering the high-quality content you have come to expect from HPN.